This is part 5 of a series. Part 1 is here, part 2 is here, part 3 is here and part 4 is here.

The question of whether machines can think is about as relevant as the question of whether submarines can swim.

In the previous post we looked at the Semantic Web, the semantic desktop, and how it can be that after 20 years of development, most desktop search engines still provide little more than keyword matching in your files.

History shows that the majority of users aren’t excited by star ratings, manual tagging or inference. App developers mostly don’t want to converge on a single database, especially if the first step is to relinquish control of their database schema.

So where do we go from here? Let’s first take a look at what happened in search outside the desktop world in the last 20 years.

Twenty Years in Online Search

Prehistory (1990s)

- Amazon launches (1994), Yahoo! launches (1994), Netflix launches (1997), Google launches (1998)

- KDE Project founded (1996), GNOME Project founded (1997)

End of the Dot Com Bubble (2000-2004)

- Amazon publishes “Amazon.com Recommendations” paper

- Google launches Image Search, Google Store (now Google Shopping), the lucrative Adwords system, and more

- Shazam music search launched

- Apple announces Spotlight (released in 2006), Microsoft announces WinFS (cancelled in 2006)

- MySpace launches (2003), Facebook launches (2004)

- “The Semantic Web” article published in Scientific American

Start of the Smartphone Era (2005-2009)

- Apple releases the first iPhone (2007)

- Google releases Android (2008)

- dbPedia launched, providing open structured data based on Wikipedia

- Wolfram Alpha knowledge engine launched, notable for using manually curated data to answer queries.

- Netflix Prize for recommendation engines is announced and won.

- The Inform 7 programming environment is released with impressive natural language processing.

- The term “Linked Data” is introduced.

Structured Data Goes Big (2010-2012)

- Facebook launches Open Graph Protocol, aimed at integrating structured web content into Facebook

- Google, Bing and Yahoo! launch schema.org, aimed at providing structured data for their search engines

- Google announces the Knowledge Graph

- Apple launches Siri, the first mainstream voice assistant

- Ubuntu integrates search prominently in Unity, initially with integration with Amazon enabled

Natural Language Arrives (2013-2015)

- Amazon’s Alexa voice assistant debuts on the Echo device.

- Microsoft’s Cortana arrives.

- Google refocuses on natural language search in their Hummingbird update

- Google releases Google Glass, to ridicule

- Apple announces the Apple Watch

Machine Learning, Everywhere (2016-2020)

- Mycroft voice assistant launches

- Google’s voice assistant launches

- Google Photos adds machine-learning based features to automatically tag photos and generate albums

- Data extracted from Facebook in 2013 becomes the focus of the Cambridge Analytica scandal

- Google Dataset Search launches

- Wikimedia Foundation announces Abstract Wikipedia

I’m sadly reminded of this quote:

Much of the proposed value of the Semantic Web is coming, but it is not coming because of the Semantic Web.

The companies listed above spend billions of US dollars per year on research, so it’d be surprising if they didn’t have the edge over those of us who prioritize open technologies. We need to accept that we’re unlikely to out-innovate them. But we can learn from them.

The way people interact with a search engine is remarkably similar to 20 years ago. We type our thoughts into a text box. Increasingly people speak a question to a voice assistant instead of typing it, but internally it’s processed as text. There are exceptions to this, such as Reverse Image Search and Shazam, and if you work for the Government you have advanced and dangerous tools to search for people. But we’re no nearer to Minority Report style interfaces, despite many of the film’s tech predictions coming true, and Dynamicland is still a prototype.

The biggest change therefore is how the search engines and assistants process your query once they receive it.

Behind the <input type="text">

Text is still our primary search interface, but these days there is a lot more than keyword matching going on when you type something into a search engine, or speak into a voice assistant.

Operators and query expansion

Google documents a few ‘common search techniques’ such as the OR operator, or numeric ranges €50-€100. They provide many more. Other engines provide similar features; here’s DuckDuckGo’s list.

These tools are useful but I rarely see them used by non-experts. However everyone benefits from query expansion, which includes word stemming, spelling correction, automated translation and more.

Natural language processing

In 2013, Google announced the “Hummingbird” update, described as the biggest change to its search algorithm in its history. Google claimed that it involves “paying more attention to all the words in the sentence”, and used the slogan “things, not strings”. SEO site moz.com gives an interesting overview, and uses the term Google seems to avoid: Semantic Search.

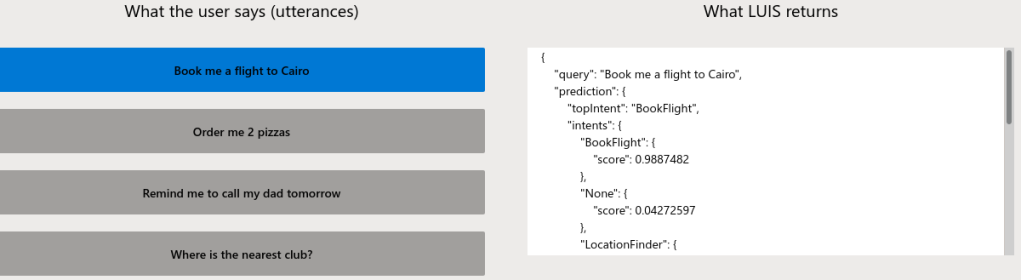

How does it work? We can get some idea by studying a service Google provides for developers called Dialogflow, described as “a natural language understanding platform”, and presumably built out of the same pieces as Hummingbird. The most interesting part is the intent matching engine, which combines machine learning and manually-specified rules to convert “How is the weather in Cambridge” into two values, one representing the intent of “query weather” and the other representing the location as “Cambridge, UK.” That is, if you’re British. If you’re based elsewhere, you’ll get results for one of the world’s many other Cambridges. We know Google collects data about us, which is controversial, but it also helps to translate subjective phrases into objective data such as which Cambridge I’m referring to.
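To make the idea of intent matching more concrete, here is a minimal rule-based sketch. It is not how Dialogflow works internally (that isn’t public, and it also involves machine learning); the intent names, the regex rules, the `KNOWN_PLACES` table and the `default_region` parameter are all invented for illustration. It only shows the shape of the result: an intent name plus some extracted values, with user context used to pick a Cambridge.

```python
import re
from dataclasses import dataclass, field

# Hypothetical rules: each intent name maps to a regex with named groups ("slots").
RULES = {
    "query_weather": re.compile(r"weather in (?P<location>[\w\s]+)", re.IGNORECASE),
    "play_music": re.compile(r"play (?P<track>.+)", re.IGNORECASE),
}

# Hypothetical lookup table standing in for "what the engine knows about the user".
KNOWN_PLACES = {
    ("cambridge", "UK"): "Cambridge, UK",
    ("cambridge", "US"): "Cambridge, MA, USA",
}


@dataclass
class Intent:
    name: str
    slots: dict = field(default_factory=dict)


def match_intent(text, default_region="UK"):
    """Return the first Intent whose rule matches the text, or None."""
    for name, pattern in RULES.items():
        match = pattern.search(text)
        if not match:
            continue
        # Trim whitespace and trailing punctuation from each captured slot.
        slots = {key: value.strip(" ?.!") for key, value in match.groupdict().items()}
        if "location" in slots:
            # Resolve an ambiguous place name using the user's region.
            key = (slots["location"].lower(), default_region)
            slots["location"] = KNOWN_PLACES.get(key, slots["location"])
        return Intent(name, slots)
    return None


print(match_intent("How is the weather in Cambridge?"))
```

Calling `match_intent` with `default_region="US"` resolves the same sentence to the other Cambridge in the table, which is the subjective-to-objective translation described above in miniature.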

As we enter the age of voice assistants, natural language processing becomes a bigger and bigger topic. As well as Google’s Dialogflow, you can use Microsoft’s LUIS or Amazon’s Comprehend, all of which are proprietary cloud services. Mycroft maintains some open source intent parsing tools, and perhaps there are more. Let me know!

Instant answers and linked data

The language processing in the Hummingbird update brought to the forefront a feature launched a year earlier called Knowledge Graph, which provided results as structured data rather than blue hyperlinks. It wasn’t the first mainstream semantic search engine — maybe Wolfram Alpha gets that crown — but it was a big change.

Wolfram Alpha claims to work with manually curated data. To a point, so does Google’s Knowledge Graph. Google’s own SEO guide asks us to mark up our websites with JSON-LD to provide ‘rich search results’.
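For readers who haven’t seen JSON-LD, here is a small sketch of what such markup looks like, built with Python’s `json` module. The album, artist and year are placeholder values, and `album_jsonld` is a made-up helper name; the `@context`, `@type`, `byArtist` and `datePublished` keys come from the schema.org vocabulary.

```python
import json


def album_jsonld(title, artist, year):
    """Build a schema.org MusicAlbum description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "MusicAlbum",
        "name": title,
        "byArtist": {"@type": "MusicGroup", "name": artist},
        "datePublished": str(year),
    }
    return json.dumps(data, indent=2)


# Placeholder values, purely for illustration.
print(album_jsonld("Example Album", "Example Band", 2015))
```

On a web page the resulting JSON would normally be embedded in a `<script type="application/ld+json">` element, where a crawler can read it without scraping the visible HTML.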

DuckDuckGo was also early to the ‘instant answers’ party. Interestingly their support was developed largely in the open. You can see a list of their instant answers here, and see the code on GitHub.

Back to the Desktop Future

Web search involves querying every computer on the Web, but Desktop search involves searching through just one. However, it’s important to see search in context. Nearly every desktop user interacts with a web search engine too and this informs our expectations.

Unsurprisingly, I’m going to recommend that GNOME continues with Tracker as its search engine and that more projects try it out. I maintain a Wishlist for Tracker (which used to be a roadmap, but Jeff inspired me to change it ;). Our wishlist goals are mostly for Tracker to get better at doing the things it already does. Our main focus has to be stability, of course. I want to start a performance testing initiative during the GNOME 40 cycle. This would help us test out the speedups in Tracker Miners master branch, and catch some extractor bugs in the process. Debian/Ubuntu packaging and finishing the GNOME Photos port are also high on the list.

When we look at where desktop search may go in the next 10 years, Tracker itself may not play the biggest role. The possibilities for search are a lot deeper. Six months ago I asked on Reddit and Discourse for some “crazy ideas” about the future of search. I got plenty of responses but nothing too crazy. So here’s my own attempt.

Automated Tagging

Maybe because I grew up with del.icio.us I think tagging is the answer to many problems, but I’ve realised that manually tagging your own photos and music is a long process that even I’m not very interested in doing.

A forum commenter noted that AI-based image classification is now practical on desktop systems, using onnxruntime. The ONNX Model Zoo contains various neural nets able to classify an image into 1,000 categories, which we could turn into tags. Another option is the FANN library if suitable models can be found or trained.

This would be great to experiment with and could one day be a part of apps like GNOME Photos. In fact, Tobias already proposed it here.

Time-based Queries

The Zeitgeist engine hasn’t seen much development recently but it’s still around, and crvi recently rejuvenated the Activity Journal app to remind us what Zeitgeist can do.

We talked about integrating Zeitgeist with Tracker back in 2011, although it got stuck at the nitpicking stage. With advancements in Tracker 3.0 this would be easier to do.

The coolest way to integrate this would be via a natural language query engine that would allow searches like "documents yesterday" or "photos from 2017". A more robust way would be to use an operator like 'documents from:yesterday'. Either way, at some layer we would have to integrate data from Zeitgeist and Tracker Miner FS and produce a suitable database query.
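As a rough illustration of the operator approach, the sketch below pulls a `from:` operator out of a query and turns it into a concrete date range. The function names and the small set of supported values (`today`, `yesterday`, a four-digit year) are assumptions made for this example; how the range would then be combined with data from Zeitgeist and Tracker Miner FS is deliberately left out.

```python
import re
from datetime import date, datetime, time, timedelta


def time_range(value, today=None):
    """Turn 'today', 'yesterday' or a year like '2017' into a half-open
    (start, end) pair of datetimes. Returns None for anything else."""
    today = today or date.today()
    if value == "today":
        start, end = today, today + timedelta(days=1)
    elif value == "yesterday":
        start, end = today - timedelta(days=1), today
    elif re.fullmatch(r"(19|20)\d{2}", value):
        start, end = date(int(value), 1, 1), date(int(value) + 1, 1, 1)
    else:
        return None
    return datetime.combine(start, time.min), datetime.combine(end, time.min)


def split_query(query, today=None):
    """Split 'documents from:yesterday' into plain terms and a time range."""
    terms, when = [], None
    for token in query.split():
        parsed = time_range(token[len("from:"):], today) if token.startswith("from:") else None
        if parsed:
            when = parsed
        else:
            # Unknown operators and ordinary words are kept as search terms.
            terms.append(token)
    return terms, when


print(split_query("documents from:yesterday"))
print(split_query("photos from:2017"))
```

Passing `today` explicitly keeps the function testable, which matters once queries like "yesterday" start appearing in an automated test suite.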

Query Operators

Users are currently given a basic option (match keywords in a file via Nautilus or the Shell) and an advanced option of opening the Terminal to write a SPARQL query.

We should provide something in between. I once saw a claim that Tracker isn’t as powerful as Recoll as it doesn’t support operators. The problem is actually that we expose a less powerful interface. Support for operators would be relatively simple to implement and could be added incrementally, which makes it a good learning project for a search engineer.
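To give a feel for the size of the task, here is a minimal sketch of the parsing half: it splits a query into plain terms, quoted phrases, excluded terms and `field:value` filters. The operator syntax, the `KNOWN_FIELDS` set and the `ParsedQuery` structure are all assumptions for illustration, not an existing Tracker interface. Translating the result into SPARQL is the other half and isn’t shown.

```python
import shlex
from dataclasses import dataclass, field

# Hypothetical set of supported operators; anything else is treated as plain text.
KNOWN_FIELDS = {"title", "artist", "type"}


@dataclass
class ParsedQuery:
    terms: list = field(default_factory=list)     # words or quoted phrases to match
    excluded: list = field(default_factory=list)  # terms prefixed with '-'
    filters: dict = field(default_factory=dict)   # field name -> value


def parse_query(query):
    """Split a user query into terms, exclusions and field filters."""
    try:
        tokens = shlex.split(query)  # keeps "quoted phrases" together
    except ValueError:
        # Unbalanced quotes (or an apostrophe): fall back to whitespace splitting.
        tokens = query.split()
    parsed = ParsedQuery()
    for token in tokens:
        name, sep, value = token.partition(":")
        if sep and value and name.lower() in KNOWN_FIELDS:
            parsed.filters[name.lower()] = value
        elif token.startswith("-") and len(token) > 1:
            parsed.excluded.append(token[1:])
        else:
            parsed.terms.append(token)
    return parsed


print(parse_query('title:Blog "12 monkeys" -draft'))
```

Because unknown operators fall through as ordinary terms, a query like `12:30` still behaves as a keyword search, and new operators can be added one at a time.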

Suggestions

The full-text search engine in Tracker SPARQL does some basic query expansion in the form of stemming (turning ‘drawing’ into ‘draw’, for example) and removing stop words like ‘the’ and ‘a’. Could it become smarter?

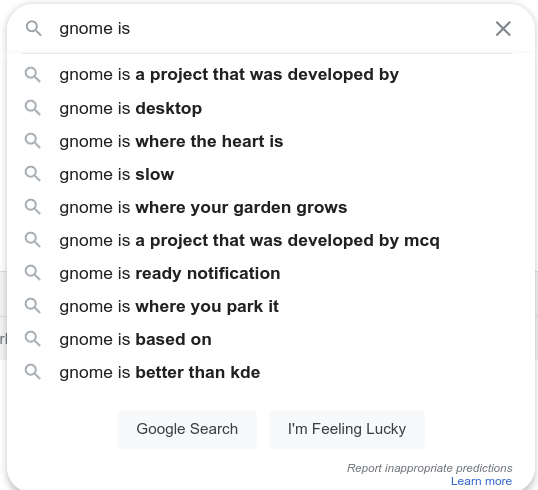

We are used to autocomplete suggestions these days. This requires the user interface to ask the search backend to suggest terms matching a prefix, for example when I type mon the backend returns money, monkey, and any other terms that were found in my documents. This is supported by other engines like Solr. The SQLite FTS5 engine used in Tracker supports prefix matching, so it would be possible to query the list of keywords matching a prefix. Performance testing would be vital here as it would increase the cost of running a search.
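The prefix lookup itself can be sketched in a few lines. This version uses a sorted in-memory list of lowercase terms and a binary search as a stand-in for the index; in Tracker the terms would have to come from the FTS5 index instead, and that part is not shown. The `suggest` function and its `limit` parameter are names made up for this example.

```python
from bisect import bisect_left


def suggest(sorted_terms, prefix, limit=5):
    """Return up to `limit` terms from a sorted list that start with `prefix`."""
    prefix = prefix.lower()
    # Binary search for the first term that is >= the prefix.
    i = bisect_left(sorted_terms, prefix)
    results = []
    # All terms sharing the prefix sit next to each other in sorted order.
    while i < len(sorted_terms) and len(results) < limit and sorted_terms[i].startswith(prefix):
        results.append(sorted_terms[i])
        i += 1
    return results


# A toy vocabulary standing in for the terms found in my documents.
vocabulary = sorted({"money", "monkey", "gnome", "monday", "music"})
print(suggest(vocabulary, "mon"))  # ['monday', 'money', 'monkey']
```

The `limit` matters for the performance concern above: the user only sees a handful of suggestions, so the backend can stop as soon as it has enough.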

Google takes autocomplete a step further by predicting queries based on other people’s search history and page contents. This makes less sense on the desktop. We could make predictions based on your own search history, but this requires us to record search history which brings in a responsibility to keep it private and secure. The most secure data is the data you don’t have.

Typo tolerance is also becoming the norm, and is a selling point of the Typesense search engine. This requires extra work to take a term like gnomr and produce a list of similar known terms (e.g. gnome) before running the search. SQLite provides a ‘spellfix1’ module that should make this possible.
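The core of typo tolerance is a string distance measure. The sketch below uses plain Levenshtein edit distance and scans a small list of known terms; it is not how spellfix1 or Typesense work internally, and the function names and the `max_distance` default are assumptions for this example. A real implementation would need something faster than a linear scan over the whole vocabulary.

```python
def edit_distance(a, b):
    """Levenshtein distance between two strings (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,                       # delete char_a
                current[j - 1] + 1,                    # insert char_b
                previous[j - 1] + (char_a != char_b),  # substitute, free if equal
            ))
        previous = current
    return previous[-1]


def corrections(term, known_terms, max_distance=1):
    """Known terms within `max_distance` edits of `term`, closest first."""
    scored = sorted((edit_distance(term, known), known) for known in known_terms)
    return [known for distance, known in scored if distance <= max_distance]


print(corrections("gnomr", ["gnome", "gnu", "money"]))  # ['gnome']
```

The search would then run against the corrected terms as well as (or instead of) what the user typed, ideally telling them which correction was applied.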

Suggestion engines can do lots more cool things, like recommending an album to listen to or a website to visit. This is getting beyond the scope of search, but it can reuse some of the data collected to power search.

The Cloud

When Google Desktop joined the list of discontinued Google products, the rationale was this:

People now have instant access to their data, whether online or offline. As this was the goal of Google Desktop, the product will be discontinued.

The life of a desktop developer is much simpler if we assume that all the user’s content lives on the Web. There are several reasons not to accept that as the status quo, though! There is a loss of privacy and agency that comes from giving your data to a 3rd party. Google no longer reads your email for advertising purposes, but you still risk not being able to access important data when you need it.

Transferring data between cloud services is something we tried to solve before. The Conduit project was a notable attempt, followed later by the GNOME Online Miners, and it’s still a goal today. The biggest blocker is that most Cloud services have a disincentive to let users access their own data via 3rd party APIs. For freemium, ad-supported services it doesn’t make sense to let users use your service while sidestepping the adverts that fund it.

Another problem is that maintaining a local index of remote content is risky. It may result in unwanted network traffic — what if an indexer updates the index while the computer is tethered to an expensive 3G connection? It may result in huge local caches of documents the user doesn’t actually care about. It’s difficult to get defaults that work for everyone.

My suggestion is to make online sync features as explicit and opt-in as possible. We should avoid ‘invisible design’ here. If a user has content in Dropbox that they want available locally, they will search online for “how to search dropbox content in gnome.” We don’t need to enable it by default. If they want to search playlists from Spotify and Youtube locally, we can add an interface to do this in GNOME Music.

Another challenge is testing our integrations. It’s hard to automatically test code that runs against a proprietary web service, and at the moment we just don’t bother.

It’s early days for the SOLID project but their aim is to open up data storage and login across web services. I’d love it if this project makes progress.

Mashups, The Revenge

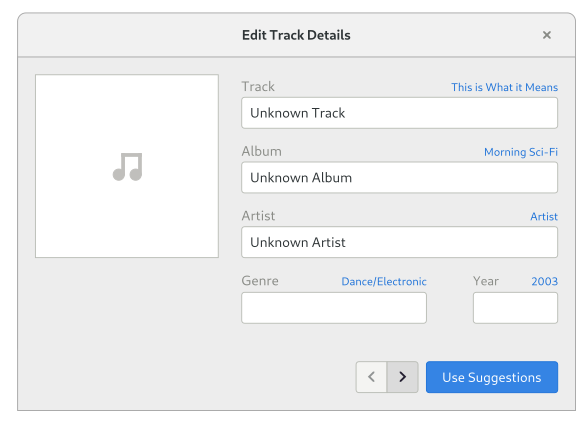

When I first got involved in Tracker, I was doing the classic thing of implementing a music player app, as if the world didn’t have enough. I hoped Tracker could one day pull data from Musicbrainz to correct the metadata in my local media files. Ten years ago we might have called this a “mashup”.

The problem with Tracker Miner FS correcting tags in the background is that it’s not always clear what the correct answer is. Later I discovered Beets, a dedicated tool that does exactly what I want. It’s an interactive CLI tool for managing a music database. GNOME Music has work-in-progress support for using metadata from Musicbrainz, and Picard is still available too.

I think we’ll see more such organisation tools, focused on being semi-automated rather than fully automated.

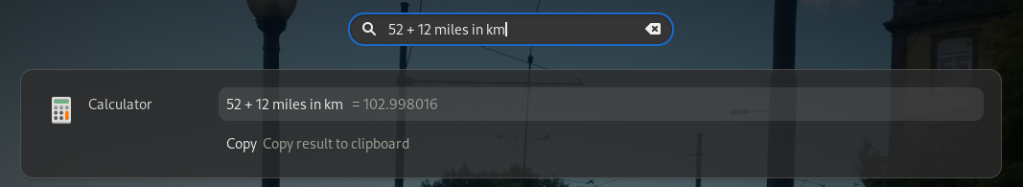

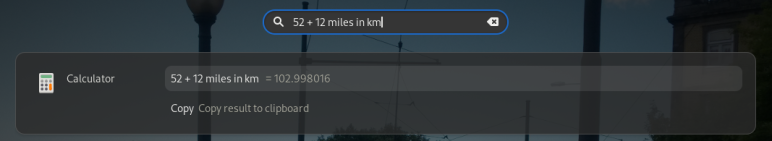

GNOME Shell search

GNOME Shell’s search is super cool. Did you know you can do maths and unit conversions, via the Calculator search provider? Did you know you can extend it by installing apps that provide other search providers? You probably knew it’s completely private by default. Your searches don’t leave the machine, unlike on Mac OS. That’s why the Shell can’t integrate Web search results — we don’t want to send all your local searches to a 3rd party.

We can integrate more offline data sources there. I recently installed the Quick Lookup dictionary app which provides Wiktionary results. It doesn’t integrate with local search, but it could do, by providing an optional bundle of common Wiktionary definitions to download. Endless OS already does this with Wikipedia.

The DBus-federated API of Shell search has one main drawback — a search can wake up every app on your system. If a majority of apps store data with libtracker-sparql, the shell could use libtracker-sparql to query these databases directly and save the overhead of spawning each app. The big advantage of the federated approach, though, is that search providers can store data in the most suitable method for that data. I hope we continue to provide that option.

Natural Language

Could search get smarter still? Could I type "Show me my financial records from last year" and get a useful answer?

Natural language queries are here already; they just haven’t made it to the desktop outside of Mac OS. The best intent parsing engines are provided as Software as a Service, which isn’t an option for GNOME to use. Time is on our side though. The Mycroft AI project maintains two open source intent parsers, Adapt and Padatious, and while they can’t yet compete with the proprietary services, they are only getting better.

What we don’t want to do is re-invent all of this technology ourselves. I would keep the natural language processing separate from Tracker SPARQL, so we integrate today’s technology which is mostly Python and JavaScript. A more likely goal would be a new “GNOME Assistant” app that could respond to natural language voice and text queries. When stable, this could integrate closely with the Shell in order to spawn apps and control settings. Mycroft AI already integrates with KDE. Why not GNOME?

This sounds like an ambitious goal, and it is, so let me propose a smaller step forwards. Mycroft allows developers to write Skills as does Alexa. Can we provide skills that integrate content from the desktop? Why should a smart speaker play music from Spotify but not from my hard drive, after all?

End to End Testing

Tracker SPARQL and Tracker Miners have automated tests to spot regressions, but we do very little testing from a user perspective. It requires a design for how search should really work in all of its corner cases — what happens if I search for "5", or "title:Blog", "12 monkeys", or "albums from 2015", or "documents I edited yesterday"?

As more complex features are added, testing becomes increasingly important, not just as “unit tests” but as whole-system integration tests, with realistic sets of data. We have the first step, live VM images. Now we need the next step: testing infrastructure, and tests.

Funding

Funding is often key to big changes like the ones I’ve described above. A lot of development in GNOME happens due to commercial and charitable sponsorship, where contributors develop and maintain GNOME as part of their employment. Contribution also happens through volunteer effort, as part of research funding (see NLnet’s call for “Next Generation Search and Discovery” proposals), or sponsored efforts like GSoC and Outreachy. This is often on a smaller scale, though.

I want this series to highlight that search is a vital part of user experience and an important area to invest design and engineering effort. To answer the question “When can we have all this?”… you’re probably going to have to follow the money.

In conclusion…

This summer was unusual: I had the whole summer free, but a pandemic stopped me from going very far. Between river swims, beach trips and bike rides I still had a lot of free time for hacking – I counted more than 160 hours donated to the Tracker 3 effort in July and August.

I’m now looking for a new job in the software world. I’ll continue as a maintainer of Tracker and I promise to review your patches, but the future might come from somewhere else.

Tracker sucks. It is constantly indexing something but I am unable to find anything with it. Beagle forever

After signing up for GitBook, your link for DuckDuckGo instant answers “you can find out how it works in detail from the documentation” results in “You are not a member of the DuckDuckHack organization, or you don’t have permissions to access this space.” Is the explanation private?

Great summary. You left out the pre-history of meta keywords and meta description tags. I wonder what the first web page was to lie about its contents to show up in search results.

It seems like the online documentation has been made private since I wrote the article. Thanks for pointing it out, I’ll update the link!

The source for the docs seems to still be available at https://github.com/duckduckgo/duckduckhack-docs but I guess I will just link to https://duck.co/ia

I don’t know which was the first place to lie about ‘meta’ tags but I doubt it took very long! 🙂

Given Tracker’s apparent purpose in life… your Prehistory should also mention the Glimpse Indexer from 1994. Still available today. https://manpages.debian.org/testing/glimpse/glimpseindex.1.en.html

originating via

https://www.usenix.org/conference/usenix-winter-1994-technical-conference/glimpse-tool-search-through-entire-file-systems

In the 90s I loved using it combined with mh single-file-per-message email folders. That combo basically provided what we take for granted with gmail search today.

Thanks! I wasn’t aware of Glimpse, what an interesting piece of history.